
Tensorrt Export of yolov5x - insufficient memory error #9336

Comments

👋 Hello @Guruprasadhegde, thank you for your interest in YOLOv5 🚀! Please visit our ⭐️ Tutorials to get started, where you can find quickstart guides for simple tasks like Custom Data Training all the way to advanced concepts like Hyperparameter Evolution.

If this is a 🐛 Bug Report, please provide screenshots and minimum viable code to reproduce your issue, otherwise we can not help you.

If this is a custom training ❓ Question, please provide as much information as possible, including dataset images, training logs, screenshots, and a public link to online W&B logging if available.

For business inquiries or professional support requests please visit https://ultralytics.com or email support@ultralytics.com.

Requirements

Python>=3.7.0 with all requirements.txt installed including PyTorch>=1.7. To get started:

git clone https://github.com/ultralytics/yolov5 # clone
cd yolov5
pip install -r requirements.txt # install

Environments

YOLOv5 may be run in any of the following up-to-date verified environments (with all dependencies including CUDA/CUDNN, Python and PyTorch preinstalled):

Status

If this badge is green, all YOLOv5 GitHub Actions Continuous Integration (CI) tests are currently passing. CI tests verify correct operation of YOLOv5 training (train.py), validation (val.py), inference (detect.py) and export (export.py) on macOS, Windows, and Ubuntu every 24 hours and on every commit.


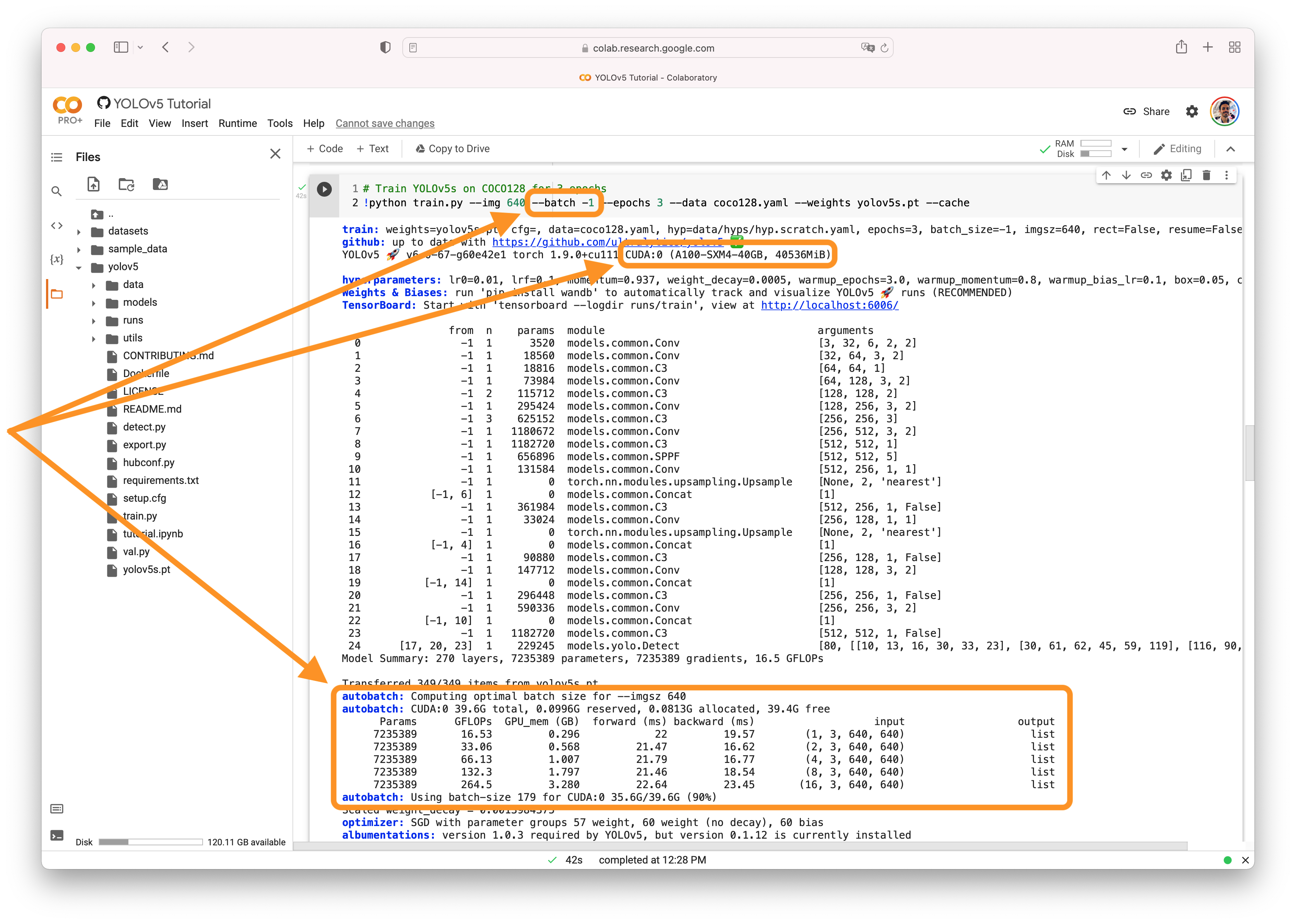

@Guruprasadhegde 👋 Hello! Thanks for asking about CUDA memory issues. YOLOv5 🚀 can be trained on CPU, single-GPU, or multi-GPU. When training on GPU it is important to keep your batch-size small enough that you do not use all of your GPU memory, otherwise you will see a CUDA Out Of Memory (OOM) Error and your training will crash. You can observe your CUDA memory utilization using either the CUDA Out of Memory SolutionsIf you encounter a CUDA OOM error, the steps you can take to reduce your memory usage are:

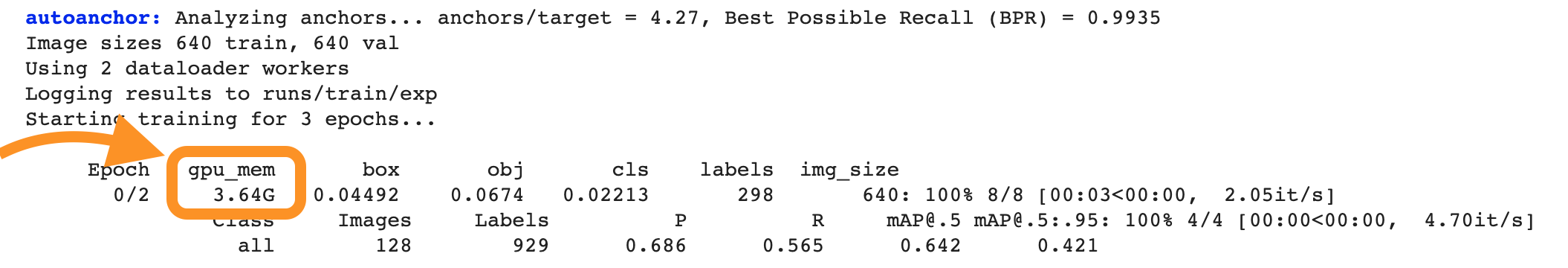

AutoBatchYou can use YOLOv5 AutoBatch (NEW) to find the best batch size for your training by passing Good luck 🍀 and let us know if you have any other questions! |

@glenn-jocher Thank you for your response!

@Guruprasadhegde same rules apply to reduce CUDA usage: smaller model, smaller image size, --half, etc. Not very complicated.
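For illustration, the memory-saving options mentioned above can be combined into one export command. The sketch below only assembles the argument list and does not run anything. `build_export_cmd` is a hypothetical helper, not part of YOLOv5; the file names are taken from the command in this issue, and the optional `--workspace` value (TensorRT workspace size in GB) is an assumption about what may help, not a confirmed fix.

```python
def build_export_cmd(weights, data, img, batch, half=True, workspace_gb=None):
    """Assemble an argv list for YOLOv5 export.py targeting a TensorRT engine.

    Hypothetical helper for illustration only; it does not launch the export.
    """
    cmd = [
        "python", "export.py",
        "--weights", weights,
        "--include", "engine",
        "--device", "0",
        "--img", str(img),
        "--data", data,
        "--batch-size", str(batch),
    ]
    if half:
        # FP16 export: halves the storage needed per tensor value.
        cmd.append("--half")
    if workspace_gb is not None:
        # Assumption: a smaller TensorRT workspace may lower peak memory
        # during the engine build, possibly at the cost of slower kernels.
        cmd += ["--workspace", str(workspace_gb)]
    return cmd


# Same model and image size as in the issue, but a smaller batch and FP16.
cmd = build_export_cmd("custom.pt", "custom.yaml", img=3288, batch=4)
print(" ".join(cmd))
```

The resulting list can be passed to `subprocess.run` or copied to a shell once the values look right.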

@glenn-jocher You are absolutely correct: if I reduce the batch size while exporting to TensorRT, it does work. But here is what confuses me (maybe I am thinking about it the wrong way). I can run val.py with batch 16, which means the model and that batch size fit on my GPU. If I then try to export the same model with the same batch size, it errors out. I don't understand why there is a CUDA error when exporting a trained model with the exact same batch size that fits on my GPU. Of course the export works when I choose batch size 4, but doing this means I am not fully utilizing my GPU during inference.

@Guruprasadhegde yeah that is kind of confusing. It appears that TensorRT is using more memory during export to try to run many optimization strategies on the model to find out what works the fastest, so exporting uses more memory than normal inference.
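Since the export needs more memory than inference, one practical workaround is to search downward for the largest batch size that still exports. The sketch below is a minimal version of that idea. `largest_exportable_batch` and `fake_export` are hypothetical names; `fake_export` is a stand-in that mimics the behaviour reported in this thread (16 fails, 4 works) and would be replaced by a real call that runs the export and reports success.

```python
def largest_exportable_batch(try_export, start=16, minimum=1):
    """Halve the batch size until try_export(batch) succeeds.

    try_export is any callable returning True on a successful export.
    Returns the first batch size that works, or None if none does.
    """
    batch = start
    while batch >= minimum:
        if try_export(batch):
            return batch
        batch //= 2  # halve and retry
    return None


def fake_export(batch):
    """Hypothetical stand-in: pretend only batches of 4 or fewer fit."""
    return batch <= 4


print(largest_exportable_batch(fake_export))
```

Halving keeps the number of export attempts small, which matters because each TensorRT build can take a long time.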

👋 Hello, this issue has been automatically marked as stale because it has not had recent activity. Please note it will be closed if no further activity occurs.

Feel free to inform us of any other issues you discover or feature requests that come to mind in the future. Pull Requests (PRs) are also always welcomed! Thank you for your contributions to YOLOv5 🚀 and Vision AI ⭐!

Search before asking

YOLOv5 Component

No response

Bug

I can run inference with batch size 16, but when I try to export the same model to a TensorRT engine with batch size 16, it gives me an insufficient memory error.

Environment

No response

Minimal Reproducible Example

python export.py --weights custom.pt --include engine --device 0 --img 3288 --data custom.yaml --batch 16 --conf-thres=0.01
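To give a sense of scale for the command above, here is a rough calculation of the input tensor size alone. It assumes a square 3-channel input at exactly the requested size of 3288 pixels; `input_tensor_bytes` is a hypothetical helper. It ignores model weights, activations, and any scratch memory the TensorRT builder uses, so it is a lower bound rather than an estimate of total usage.

```python
GIB = 1024 ** 3


def input_tensor_bytes(batch, img, channels=3, bytes_per_value=4):
    """Bytes needed for one dense input tensor of shape (batch, channels, img, img)."""
    return batch * channels * img * img * bytes_per_value


# Compare the batch sizes from the issue at FP32 (4 bytes) and FP16 (2 bytes).
for batch in (16, 4):
    for name, width in (("FP32", 4), ("FP16", 2)):
        size = input_tensor_bytes(batch, 3288, bytes_per_value=width)
        print(f"batch={batch:2d} {name}: {size / GIB:.2f} GiB")
```

At batch 16 and FP32 the input by itself is about 2 GB, so any extra buffers allocated during the engine build add up quickly at this resolution.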

Additional

No response

Are you willing to submit a PR?
