GPU memory consumption #6107

Replies: 1 comment


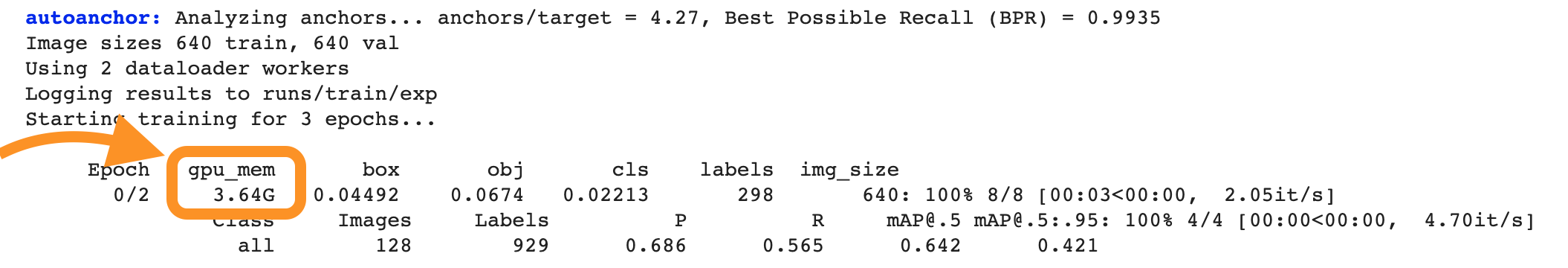

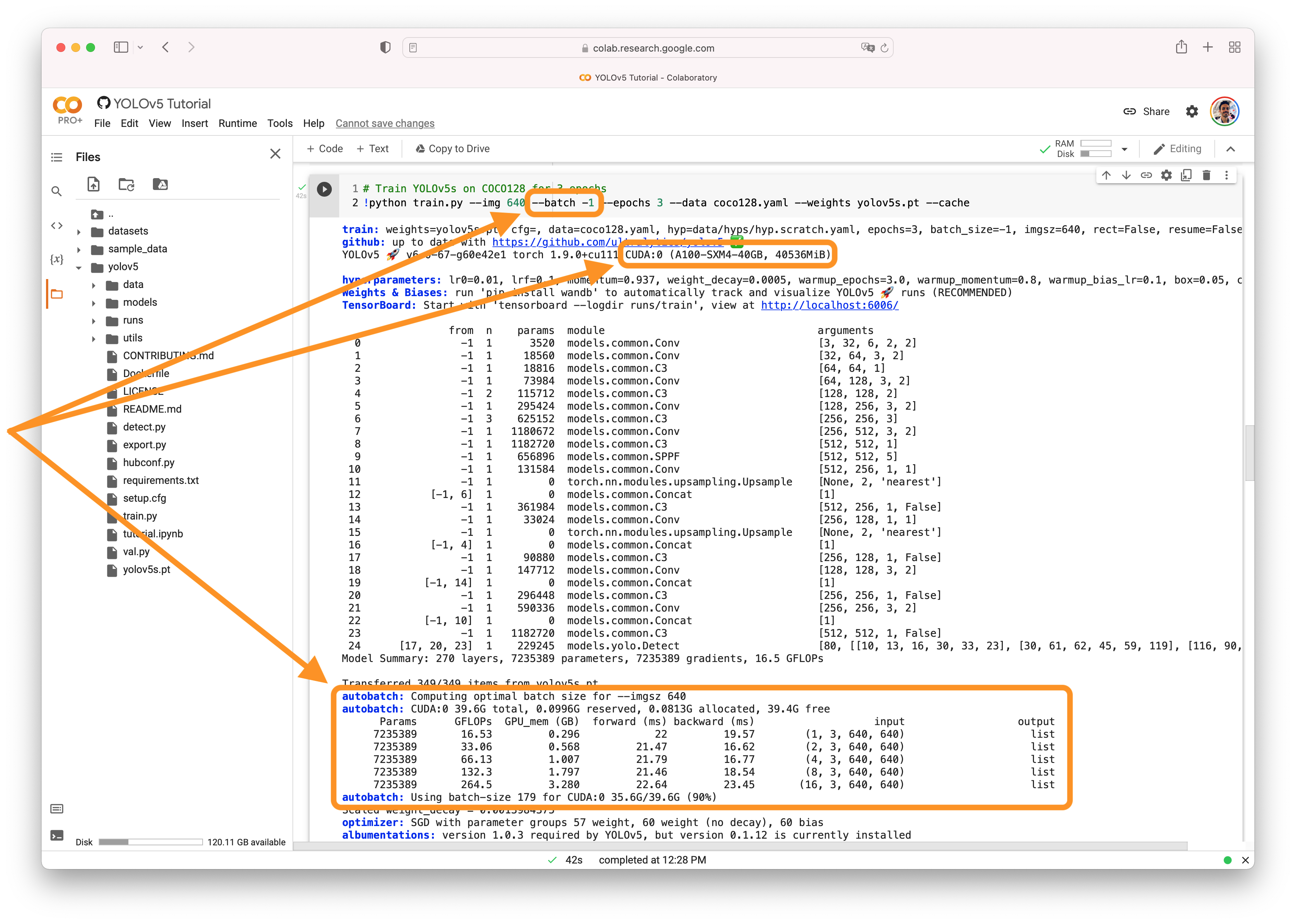

@revathib01 👋 Hello! Thanks for asking about CUDA memory issues. YOLOv5 🚀 can be trained on CPU, single-GPU, or multi-GPU. When training on GPU, it is important to keep your batch-size small enough that you do not use all of your GPU memory; otherwise you will see a CUDA Out Of Memory (OOM) error and your training will crash. You can observe your CUDA memory utilization using either the […]

CUDA Out of Memory Solutions

If you encounter a CUDA OOM error, the steps you can take to reduce your memory usage are:

AutoBatch

You can use YOLOv5 AutoBatch (NEW) to find the best batch size for your training by passing […]

Good luck 🍀 and let us know if you have any other questions!
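The advice above is to keep the batch size small enough that training does not exhaust GPU memory. As a rough illustration of doing that by hand, here is a minimal sketch that starts at a requested batch size and halves it until one step succeeds. This is a generic retry pattern, not how AutoBatch works internally. The names `largest_fitting_batch`, `try_batch`, and `fake_step` are hypothetical, and `MemoryError` stands in for the out-of-memory error only to keep the sketch dependency-free (in PyTorch a CUDA OOM typically surfaces as a `RuntimeError`).

```python
from typing import Callable


def largest_fitting_batch(
    try_batch: Callable[[int], None],
    start: int = 32,
    minimum: int = 1,
) -> int:
    """Halve the batch size until `try_batch` stops raising MemoryError."""
    batch = start
    while batch >= minimum:
        try:
            # Hypothetical callable: runs one training step at this batch size.
            try_batch(batch)
            return batch
        except MemoryError:
            # Out of memory: retry with half the batch size.
            batch //= 2
    raise RuntimeError(f"even batch size {minimum} does not fit in memory")


def fake_step(batch: int, limit: int = 10) -> None:
    """Stand-in for a real training step.

    Assumption for illustration only: any batch above `limit`
    is treated as running out of memory.
    """
    if batch > limit:
        raise MemoryError(f"batch {batch} exceeds simulated limit {limit}")


if __name__ == "__main__":
    # Tries 32, then 16, then 8; the simulated limit of 10 admits 8.
    print(largest_fitting_batch(fake_step))
```

In a real run, `try_batch` would wrap a single forward and backward pass and catch the framework's own OOM exception, so the search costs only a few short trial steps before full training starts.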


Hi,

Can you please help us with the following issues?

1. I have trained a yolov5x model on a custom dataset of 300 images with a batch size of 10 for 25 epochs in Google Colab. When I try to increase the batch size, all of the available RAM is used and the session crashes. Can you suggest how much GPU memory is required to train a yolov5x model with a minimum batch size of 32 (the dataset might grow)?

2. While running detect.py with the weights trained above on a local machine (with a 4 GB GeForce GTX 1050 GPU), I observed that it consumes 2.5 GB of the 4 GB of memory. Can you let us know if there is any way to reduce memory consumption?
