YOLOv5 training problem #4656


dilsadunsal asked this question in Q&A

Replies: 1 comment 2 replies


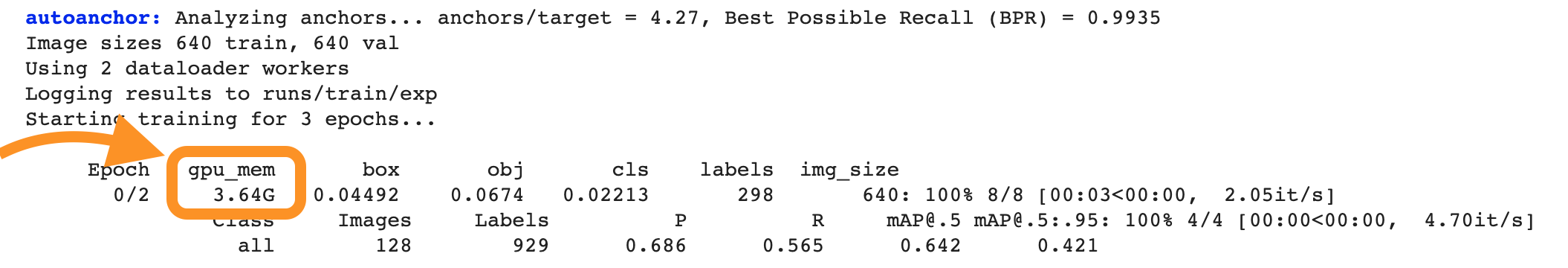

@siangfei 👋 Hello! Thanks for asking about CUDA memory issues. YOLOv5 🚀 can be trained on CPU, single-GPU, or multi-GPU. When training on a GPU, it is important to keep your batch size small enough that you do not use all of your GPU memory; otherwise you will see a CUDA Out Of Memory (OOM) error and your training will crash.

You can observe your CUDA memory utilization using either the …

If you encounter a CUDA OOM error, the steps you can take to reduce your memory usage are:
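The batch-size advice above can be automated with a simple back-off loop that halves the batch size after each OOM failure. This is a minimal sketch, not part of YOLOv5: `train_with_backoff` and `fake_train` are hypothetical names, and `fake_train` only stands in for a real training call. It assumes PyTorch reports a CUDA OOM as a `RuntimeError` whose message contains "out of memory" (`torch.cuda.OutOfMemoryError` subclasses `RuntimeError` in recent PyTorch versions).

```python
from typing import Callable


def train_with_backoff(train_fn: Callable[[int], None],
                       batch_size: int, min_batch: int = 1) -> int:
    """Call train_fn(batch_size), halving batch_size after each OOM error.

    Returns the batch size that finally succeeded.
    """
    while batch_size >= min_batch:
        try:
            train_fn(batch_size)
            return batch_size
        except RuntimeError as err:
            # Only treat out-of-memory errors as retryable; re-raise anything else.
            if "out of memory" not in str(err):
                raise
            batch_size //= 2
    raise RuntimeError(f"out of memory even at batch size {min_batch}")


def fake_train(batch_size: int) -> None:
    # Hypothetical stand-in: pretend anything above batch size 4 exhausts GPU memory.
    if batch_size > 4:
        raise RuntimeError("CUDA out of memory. Tried to allocate ...")
    print(f"trained with batch size {batch_size}")


print(train_with_backoff(fake_train, 16))  # tries 16, 8, then succeeds at 4
```

In a real PyTorch run you would typically also call `torch.cuda.empty_cache()` and rebuild the model and dataloader before retrying, since a failed step can leave memory allocated.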



Hello, I trained YOLOv5 on my own dataset on Colab with these hyperparameters: image size 640, batch size 16, 50 epochs.

Now I would like to train on the same dataset with a different hyperparameter: an image size of 576.

But the training failed with a memory fault. What is the reason for this problem?
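As a rough sanity check, assume activation memory dominates and scales with batch size times the number of input pixels (a simplification: weights, optimizer state, and cached images do not scale this way). Under that assumption, going from 640 to 576 at the same batch size should need less GPU memory, not more. The helpers below are hypothetical, not part of YOLOv5; the stride of 32 is an assumption matching the standard P5 models.

```python
def relative_activation_memory(img_new: int, batch_new: int,
                               img_old: int, batch_old: int) -> float:
    """Rough ratio of activation memory for a new run vs. an old one.

    Assumes square inputs and memory proportional to batch_size * img_size**2.
    """
    return (batch_new * img_new ** 2) / (batch_old * img_old ** 2)


def is_valid_img_size(img_size: int, stride: int = 32) -> bool:
    # Hypothetical check: the image size should be a multiple of the max stride.
    return img_size % stride == 0


ratio = relative_activation_memory(576, 16, 640, 16)
print(f"576 vs 640 at batch 16: {ratio:.2f}x the activation memory")
print("576 is a multiple of 32:", is_valid_img_size(576))
```

With these assumptions the 576 run needs about 0.81x the activation memory of the 640 run, so if it still fails, the cause is likely something other than the image size itself.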
