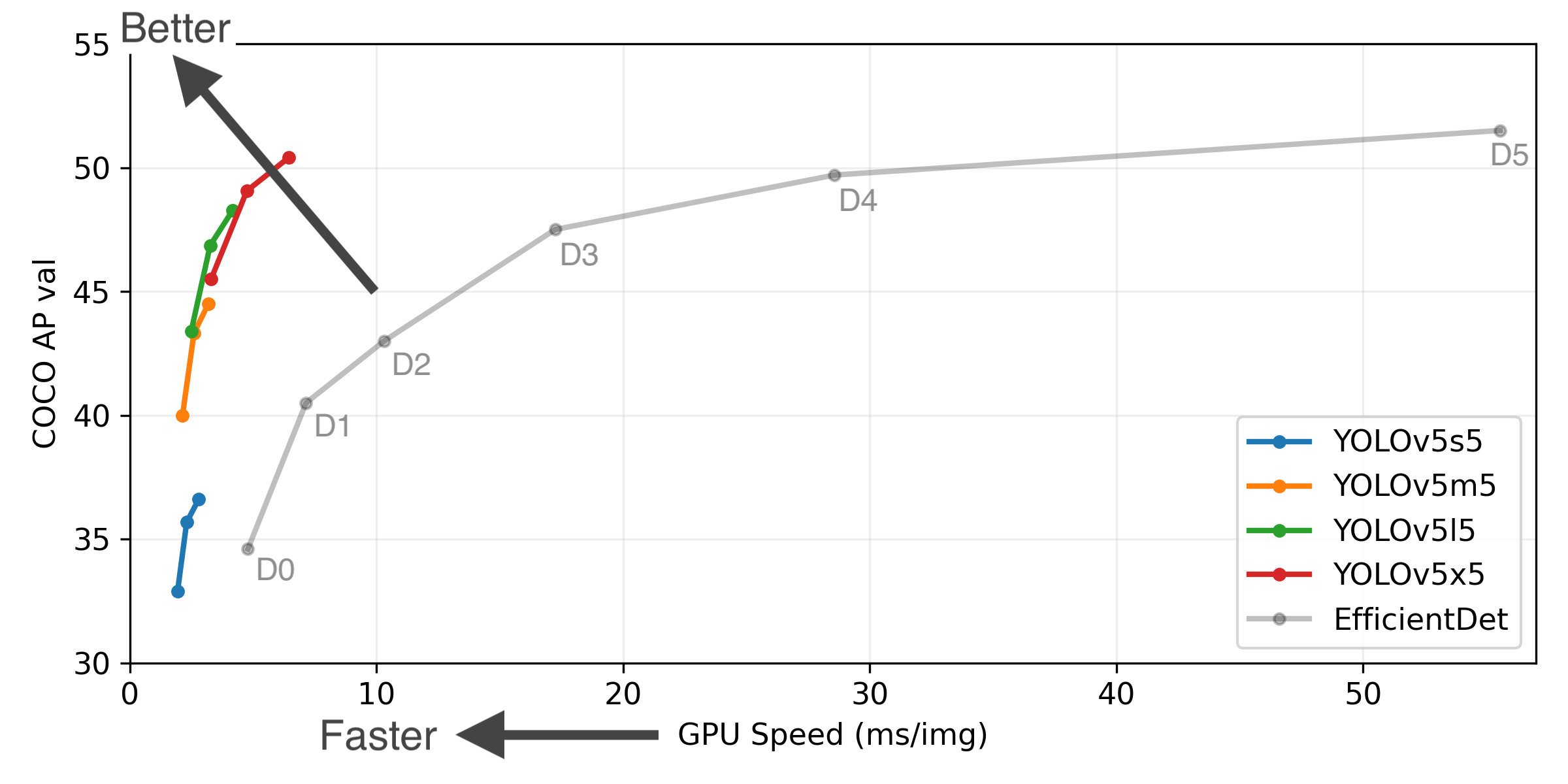

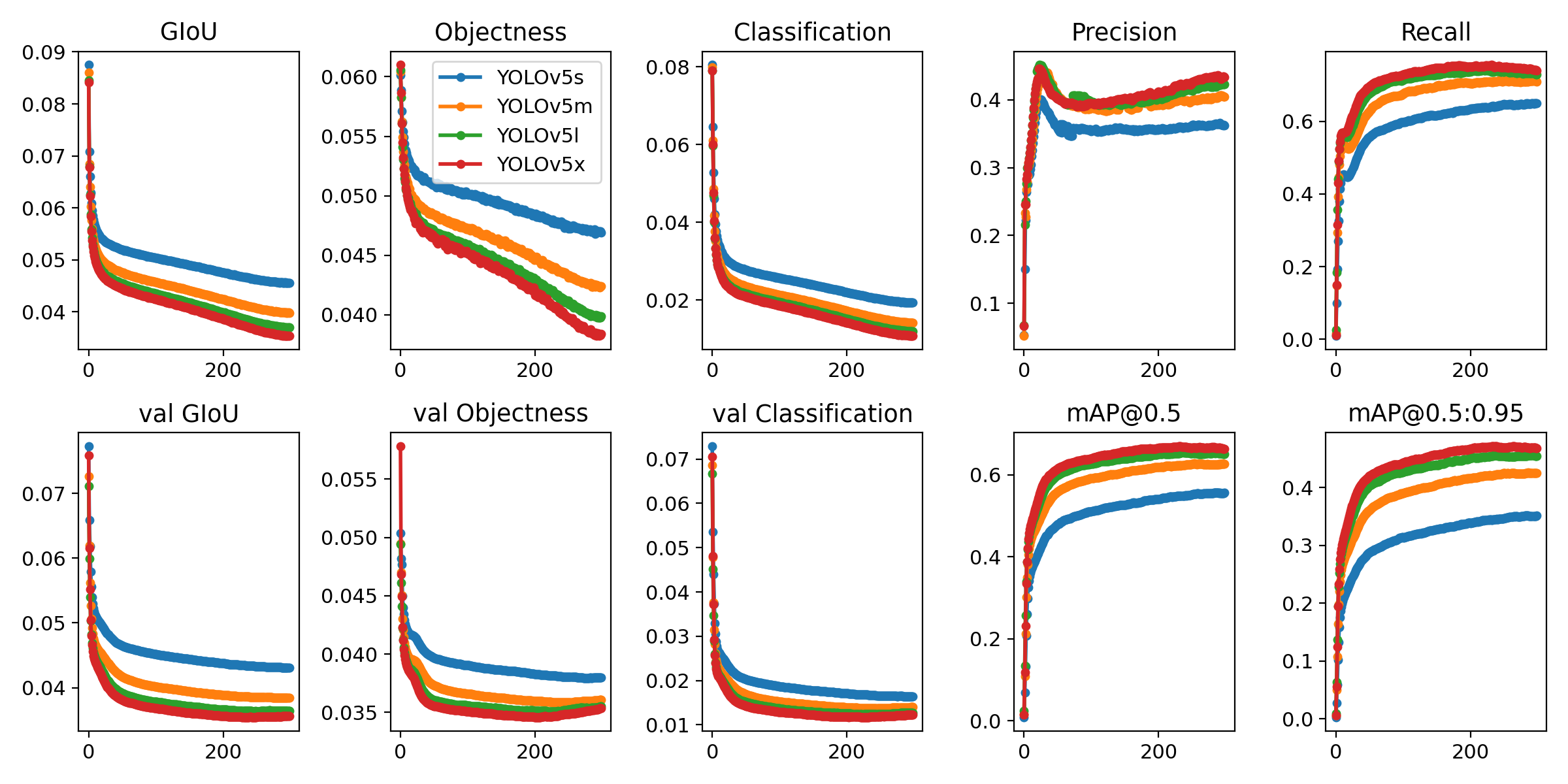

+YOLOv5 🚀 is a family of object detection architectures and models pretrained on the COCO dataset. It represents Ultralytics open-source research into future vision AI methods, incorporating lessons learned and best practices evolved over thousands of hours of research and development.

-### PyTorch Hub

-Inference with YOLOv5 and [PyTorch Hub](https://github.com/ultralytics/yolov5/issues/36):

-```python

-import torch
