A Swift 4 framework for streaming remote audio with real-time effects using AVAudioEngine. Read the full article here!

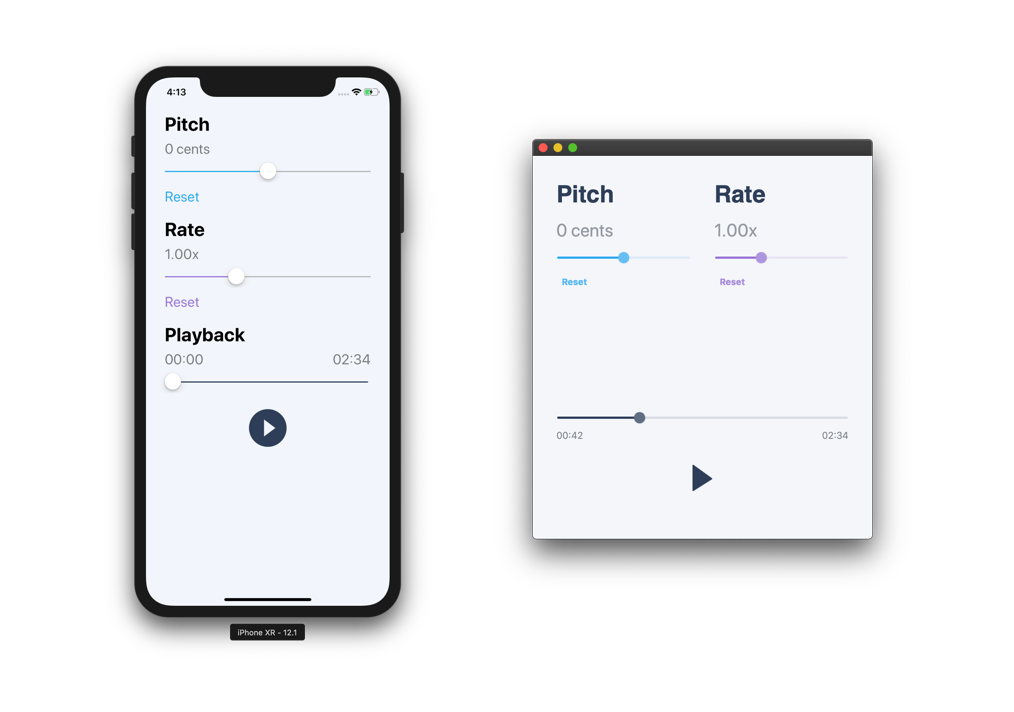

This repo contains two example projects, one for iOS and one for macOS, in the TimePitchStreamer.xcodeproj found in the Examples folder.

Because AVAudioEngine works like a hybrid between Audio Queue Services and Audio Unit Processing Graph Services, we can combine what we know about each to create a streamer that schedules audio like a queue but supports real-time effects like an audio graph.
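As a rough illustration of the "audio graph" half of that idea, here is a minimal sketch that wires a player node through a time/pitch effect into the engine's main mixer. The helper name `makeTimePitchGraph` and the 44.1 kHz stereo format are assumptions made for this example, not necessarily what the framework itself does.

```swift
import AVFoundation

/// Builds a small graph: player node -> time/pitch effect -> main mixer.
/// `makeTimePitchGraph` is a hypothetical helper used only for illustration.
func makeTimePitchGraph(rate: Float, pitchCents: Float)
    -> (engine: AVAudioEngine, player: AVAudioPlayerNode, effect: AVAudioUnitTimePitch) {
    let engine = AVAudioEngine()
    let player = AVAudioPlayerNode()
    let effect = AVAudioUnitTimePitch()

    // Playback rate (1.0 is normal speed) and pitch shift in cents.
    effect.rate = rate
    effect.pitch = pitchCents

    // Nodes must be attached to the engine before they can be connected.
    engine.attach(player)
    engine.attach(effect)

    // Assumed LPCM format for this sketch: 44.1 kHz, stereo, deinterleaved Float32.
    let format = AVAudioFormat(standardFormatWithSampleRate: 44_100, channels: 2)
    engine.connect(player, to: effect, format: format)
    engine.connect(effect, to: engine.mainMixerNode, format: format)

    return (engine, player, effect)
}
```

The "queue" half would then be a matter of calling `scheduleBuffer(_:completionHandler:)` on the player node for each LPCM buffer, after `try engine.start()` and `player.play()`.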

At a high level, here's a breakdown of the streamer's components:

- Download the audio data from the internet. We know we need to pull raw audio data from somewhere. How we implement the downloader doesn't matter as long as we're receiving audio data in its binary format (i.e. Data in Swift 4).

- Parse the binary audio data into audio packets. To do this we will use the often confusing, but very awesome Audio File Stream Services API.

- Read the parsed audio packets into LPCM audio packets. To handle any format conversion required (specifically compressed to uncompressed) we'll be using the Audio Converter Services API.

- Stream (i.e. playback) the LPCM audio packets using an AVAudioEngine by scheduling them onto the AVAudioPlayerNode at the head of the engine.
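To make the data flow between these four stages concrete, here is a toy sketch. Every type in it (`Packet`, `PCMChunk`, `ToyParser`, `ToyStreamer`) is a hypothetical stand-in: the parser simply slices bytes into fixed-size packets and the "decoder" maps bytes to floats, whereas a real implementation would use Audio File Stream Services, Audio Converter Services, and AVAudioPlayerNode for those steps.

```swift
import Foundation

// Hypothetical stand-ins for a parsed audio packet and a decoded LPCM chunk.
struct Packet { let bytes: Data }
struct PCMChunk { let samples: [Float] }

/// Stage 2 stand-in: cuts incoming bytes into fixed-size packets and keeps
/// any leftover bytes until the next chunk of downloaded data arrives.
final class ToyParser {
    private var pending = Data()
    private let packetSize: Int

    init(packetSize: Int) { self.packetSize = packetSize }

    func parse(_ data: Data) -> [Packet] {
        pending.append(data)
        var packets = [Packet]()
        while pending.count >= packetSize {
            packets.append(Packet(bytes: Data(pending.prefix(packetSize))))
            pending = Data(pending.dropFirst(packetSize))
        }
        return packets
    }
}

/// Stage 3 stand-in: "decodes" a packet by mapping each byte to 0...1.
func decode(_ packet: Packet) -> PCMChunk {
    return PCMChunk(samples: packet.bytes.map { Float($0) / 255 })
}

/// Stage 4 stand-in: records chunks in the order they would be scheduled.
final class ToyStreamer {
    private(set) var scheduled = [PCMChunk]()
    private let parser: ToyParser

    init(packetSize: Int) { parser = ToyParser(packetSize: packetSize) }

    /// Called once per chunk of downloaded bytes (the output of stage 1).
    func receive(_ data: Data) {
        for packet in parser.parse(data) {
            scheduled.append(decode(packet))
        }
    }
}
```

The point of the sketch is that each stage only needs the output of the previous one, so downloaded bytes can be parsed, converted, and scheduled incrementally rather than after the whole file has arrived.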

In the following sections we're going to dive into the implementation of each of these components. We're going to use a protocol-based approach to define the functionality we'd expect from each component and then do a concrete implementation. For instance, for the Download component we're going to define a Downloadable protocol and perform a concrete implementation of the protocol using URLSession in the Downloader class.
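A minimal sketch of what that protocol-plus-implementation pairing could look like is shown below. The property and method names (`url`, `onData`, `onComplete`, `start()`, `stop()`) are assumptions made for this example; the framework's actual Downloadable protocol may differ.

```swift
import Foundation
#if canImport(FoundationNetworking)
import FoundationNetworking
#endif

/// Assumed shape of the protocol: what any downloader must offer the streamer.
protocol Downloadable: AnyObject {
    var url: URL? { get set }
    var onData: ((Data) -> Void)? { get set }
    var onComplete: ((Error?) -> Void)? { get set }
    func start()
    func stop()
}

/// One possible concrete implementation backed by a URLSession data task.
final class Downloader: NSObject, Downloadable, URLSessionDataDelegate {
    var url: URL?
    var onData: ((Data) -> Void)?
    var onComplete: ((Error?) -> Void)?

    // With a nil delegate queue, callbacks arrive on a background serial queue.
    private lazy var session: URLSession = {
        URLSession(configuration: .default, delegate: self, delegateQueue: nil)
    }()
    private var task: URLSessionDataTask?

    func start() {
        guard let url = url else { return }
        task = session.dataTask(with: url)
        task?.resume()
    }

    func stop() {
        task?.cancel()
        task = nil
    }

    // Called repeatedly as chunks of the response body arrive.
    func urlSession(_ session: URLSession, dataTask: URLSessionDataTask, didReceive data: Data) {
        onData?(data)
    }

    // Called once when the transfer finishes, fails, or is cancelled.
    func urlSession(_ session: URLSession, task: URLSessionTask, didCompleteWithError error: Error?) {
        onComplete?(error)
    }
}
```

Because the rest of the streamer would depend only on `Downloadable`, the URLSession-based class could be swapped for a file-backed or mock downloader in tests without touching the parsing or playback code.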